Tutorial – We’ve introduced a new pose editor to MocapX 2.0 called PoseBoard.

We’ve added a new pose editor, PoseBoard, to the previously announced MocapX 2.0. In this tutorial, we explain why it is useful and how to use it.

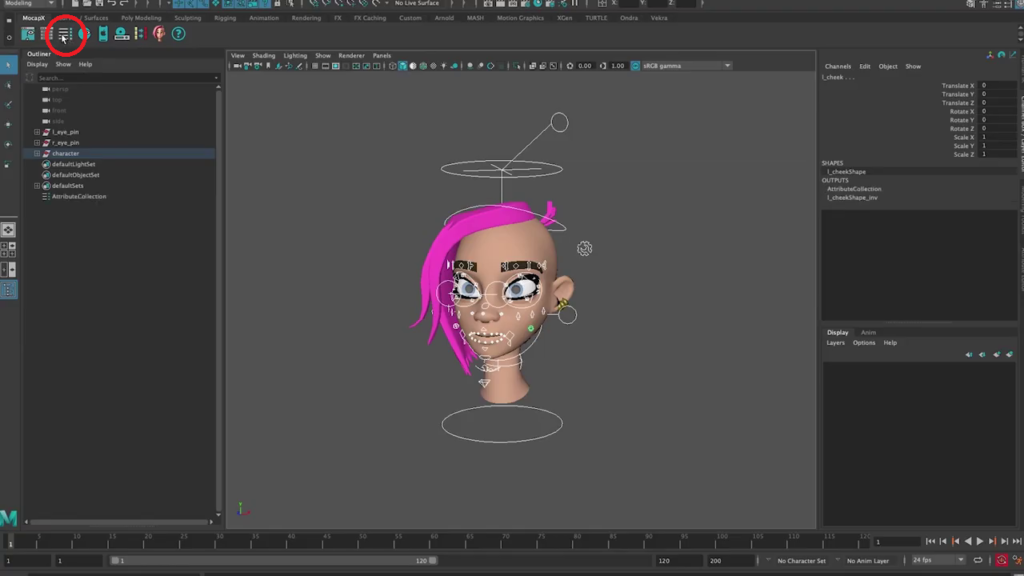

When you open our demo character Lucy in Maya, you’ll see a full rig and a lot of controllers.

The first step is to add all of the controllers to an Attribute Collection. We can start by hiding everything and showing just the controllers. Then we select all of them and create an Attribute Collection by clicking on its icon on the shelf.

Now if we opened the PoseLib editor, we would typically have to go to our online documentation, find the pose we want to create, and then go back to Maya and recreate it. This is a tedious process, because you have to constantly switch back and forth between Maya and your browser. So for MocapX 2.0 we came up with PoseBoard to make things easier.

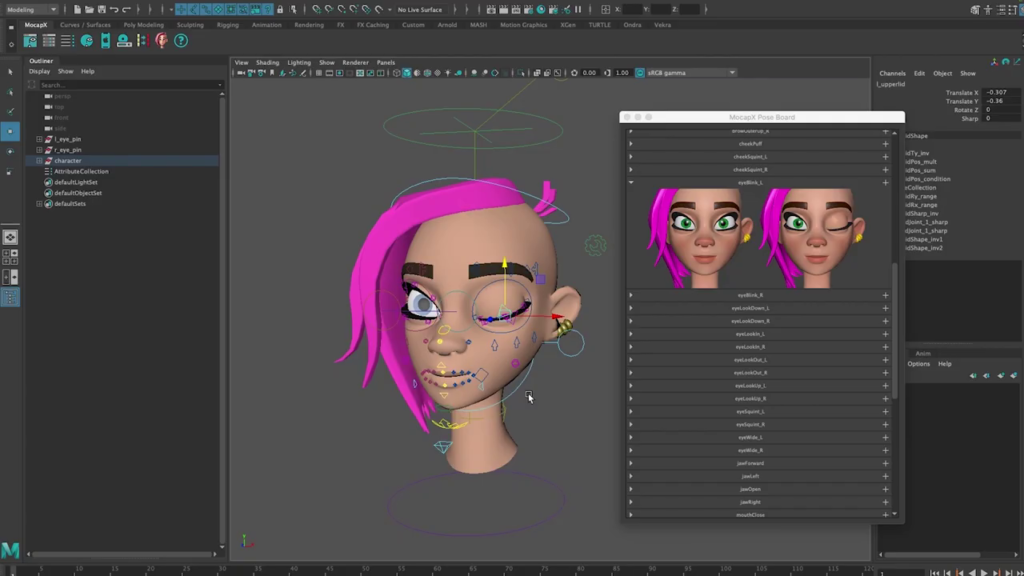

So let’s go back to Maya and open PoseBoard from the shelf. It shows a list of all the expressions that MocapX currently tracks and supports, and that can be matched to your rig or model using blendshapes.

In the PoseBoard editor you can go through all of the poses, see how they look, and easily create them. For example, let’s create eyeBlink Left. First, find the pose in the list. Then adjust the rig so the character’s left eye is closed. Finally, click on the add button.

Now when we open the PoseLib editor we can see that our first pose is there. We can test it by moving the slider to the right.
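To make the slider test more concrete, here is a minimal Python sketch of what a pose slider conceptually does: it blends each stored controller attribute between its neutral value and its posed value. This is only an illustration under stated assumptions, not MocapX’s actual implementation; the `blend_pose` helper and the controller attribute names are hypothetical.

```python
def blend_pose(neutral, pose, weight):
    """Linearly blend attribute values from neutral toward pose.

    neutral, pose: dicts mapping attribute name -> float value.
    weight: slider position, clamped to 0.0 (neutral) .. 1.0 (full pose).
    """
    weight = max(0.0, min(1.0, weight))
    blended = {}
    for attr, rest_value in neutral.items():
        # Attributes the pose does not touch stay at their neutral value.
        target = pose.get(attr, rest_value)
        blended[attr] = rest_value + (target - rest_value) * weight
    return blended


# Hypothetical controller attributes for a left-eye blink (assumed names).
neutral = {"L_eyelid_ctrl.translateY": 0.0, "L_brow_ctrl.translateY": 0.0}
eye_blink_left = {"L_eyelid_ctrl.translateY": -1.0}

# Slider halfway to the right: the eyelid is half closed, the brow is untouched.
half_closed = blend_pose(neutral, eye_blink_left, 0.5)
```

Moving the slider all the way to the right corresponds to `weight = 1.0`, which reproduces the pose exactly as it was stored.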

We can continue by creating the rest of the poses. As you can see in PoseBoard, once a pose is created it turns grey. This indicates that the pose is already on the PoseLib list. If we delete the pose from the PoseLib editor, it becomes active again in PoseBoard.
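The grey-out behaviour works like a checklist: every supported expression is compared against the poses that already exist in PoseLib. The sketch below illustrates that idea in plain Python. It is an assumption about the logic, not MocapX code; the `pose_status` helper is hypothetical, and the expression names are a small illustrative subset rather than the full list shown in PoseBoard.

```python
def pose_status(supported, created):
    """Map every supported expression to 'created' or 'missing'."""
    created = set(created)
    return {
        name: ("created" if name in created else "missing")
        for name in supported
    }


# Illustrative subset of expression names (assumed for this example).
supported = ["eyeBlinkLeft", "eyeBlinkRight", "jawOpen"]
poselib = ["eyeBlinkLeft"]  # poses already added to the PoseLib list

status = pose_status(supported, poselib)

# Deleting the pose from the PoseLib list makes it available again.
poselib.remove("eyeBlinkLeft")
status_after_delete = pose_status(supported, poselib)
```

Here a `'created'` entry corresponds to a pose shown in grey, and a `'missing'` entry to a pose that can still be added.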

So let’s fast forward and jump to a scene where all the poses have already been created. Now all we have to do is create a realtime device, connect to an iPhone or iPad, and click auto connect in PoseLib. With that, we are live streaming the motion capture data.

Download MocapX 2.0 and the MocapX app now!